By David Shepardson

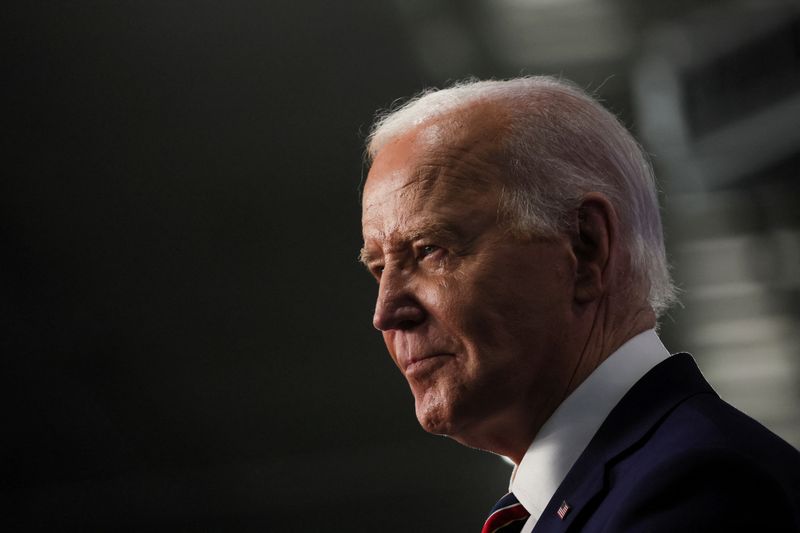

(Reuters) - A Louisiana Democratic political consultant was indicted over a fake robocall imitating U.S. President Joe Biden seeking to dissuade people from voting for him in New Hampshire's Democratic primary election, the New Hampshire Attorney General's Office said on Thursday.

Steven Kramer, 54, faces 13 felony charges of voter suppression and 13 misdemeanor charges of impersonating a candidate after thousands of New Hampshire residents received a robocall message asking them not to vote until November. Kramer faces a series of initial court appearances starting on June 14 in Merrimack Superior Court.

A lawyer for Kramer could not immediately be identified. Kramer did not respond to a request seeking comment.

After the calls were discovered in January, Kramer told CBS and NBC in February that he had paid $500 to have them sent to voters to call attention to the issue. He had worked for Biden's primary challenger, Representative Dean Phillips, who denounced the calls.

Separately, the Federal Communications Commission on Thursday proposed fining Kramer $6 million over the robocalls, which it said used an AI-generated deepfake audio recording of Biden's cloned voice. The agency said its rules prohibit the transmission of inaccurate caller ID information.

"When a caller sounds like a politician you know, a celebrity you like, or a family member who is familiar, any one of us could be tricked into believing something that is not true with calls using AI technology," FCC Chair Jessica Rosenworcel said.

The FCC also proposed to fine Lingo Telecom $2 million for allegedly transmitting the robocalls.

There is growing concern in Washington that AI-generated content could mislead voters in the November presidential and congressional elections. Some senators want to pass legislation before November that would address AI threats to election integrity.

"New Hampshire remains committed to ensuring that our elections remain free from unlawful interference and our investigation into this matter remains ongoing," Attorney General John Formella, a Republican, said.

Formella hopes the state and federal actions "send a strong deterrent signal to anyone who might consider interfering with elections, whether through the use of artificial intelligence or otherwise."

A Biden campaign spokesperson said the campaign "has assembled an interdepartmental team to prepare for the potential effects of AI this election, including the threat of malicious deep fakes." The team has been in place since September "and has a wide variety of tools at its disposal to address issues."

On Wednesday, Rosenworcel proposed requiring disclosure of AI-generated content in political ads on radio and TV, for both candidate and issue advertisements, without prohibiting any AI-generated content.

The FCC said AI is expected to play a substantial role in 2024 political ads. It singled out the potential for misleading "deep fakes," which are "altered images, videos, or audio recordings that depict people doing or saying things that [they] did not actually do or say, or events that did not actually occur."